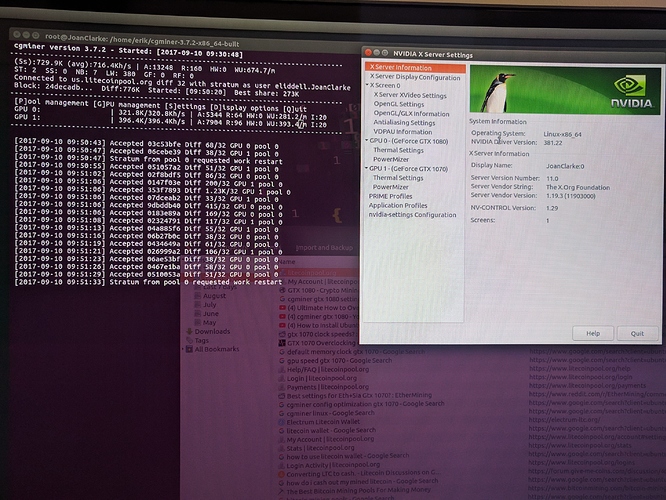

So I reinstalled Ubuntu desktop and purged NVIDIA. This lets me log in normally, but with awful resolution. I reinstalled NVIDIA (via ubuntu-drivers autoinstall) and got caught in a login loop again, but I can SSH in. While SSHed in I am able to run hashcat (which is what this machine was originally built for), and it sees all my cards and gives me the rates I expect. So that's good, at least I know my NVIDIA drivers and cards are working… I can't run ccminer, but that's because I have to reinstall CUDA… doing that now and it's taking a while… I have googled the hell out of “login loop ubuntu 16.04” and tried everything… I had this problem before and the internet was a help… not this time…

OK, CUDA is installed, and from my SSH session I ran my script:

sh BTCMinerGate.sh

No protocol specified

Failed to connect to Mir: Failed to connect to server socket: No such file or directory

Unable to init server: Could not connect: Connection refused

ERROR: The control display is undefined; please run `nvidia-settings --help` for usage information.

No protocol specified

Failed to connect to Mir: Failed to connect to server socket: No such file or directory

Unable to init server: Could not connect: Connection refused

ERROR: The control display is undefined; please run `nvidia-settings --help` for usage information.

Power limit for GPU 00000000:01:00.0 was set to 100.00 W from 180.00 W.

Warning: persistence mode is disabled on this device. This settings will go back to default as soon as driver unloads (e.g. last application like nvidia-smi or cuda application terminates). Run with [--help | -h] switch to get more information on how to enable persistence mode.

All done.

*** ccminer 2.2.1 for nVidia GPUs by tpruvot@github ***

Built with the nVidia CUDA Toolkit 8.0 64-bits

Originally based on Christian Buchner and Christian H. project

Include some algos from alexis78, djm34, sp, tsiv and klausT.

BTC donation address: 1AJdfCpLWPNoAMDfHF1wD5y8VgKSSTHxPo (tpruvot)

[2017-09-13 08:42:04] Using JSON-RPC 2.0

[2017-09-13 08:42:04] Starting on stratum+tcp://bcn.pool.minergate.com:45550

[2017-09-13 08:42:04] 3 miner threads started, using 'cryptonight' algorithm.

[2017-09-13 08:42:06] Stratum difficulty set to 1063 (1.063)

[2017-09-13 08:42:07] GPU #1: GeForce GTX 1070, 8007 MB available, 15 SMX

[2017-09-13 08:42:07] GPU #1: 960 threads (9.875) with 60 blocks

[2017-09-13 08:42:07] GPU #0: GeForce GTX 1080, 7981 MB available, 20 SMX

[2017-09-13 08:42:07] GPU #0: 1280 threads (10.25) with 80 blocks

[2017-09-13 08:42:07] GPU #2: GeForce GTX 1070, 8007 MB available, 15 SMX

[2017-09-13 08:42:07] GPU #2: 960 threads (9.875) with 60 blocks

[2017-09-13 08:42:11] GPU #1: GeForce GTX 1070, 380.34 H/s

[2017-09-13 08:42:12] accepted: 1/1 (diff 1.604), 380.34 H/s yes!

[2017-09-13 08:42:12] accepted: 2/2 (diff 2.071), 380.34 H/s yes!

[2017-09-13 08:42:12] GPU #2: GeForce GTX 1070, 143.59 H/s

[2017-09-13 08:42:13] accepted: 3/3 (diff 5.416), 727.61 H/s yes!

[2017-09-13 08:42:13] accepted: 4/4 (diff 1.845), 727.61 H/s yes!

[2017-09-13 08:42:13] accepted: 5/5 (diff 2.587), 727.61 H/s yes!

[2017-09-13 08:42:13] GPU #0: GeForce GTX 1080, 172.27 H/s

[2017-09-13 08:42:13] accepted: 6/6 (diff 1.148), 899.89 H/s yes!

[2017-09-13 08:42:16] GPU #1: GeForce GTX 1070, 597.47 H/s

[2017-09-13 08:42:16] GPU #2: GeForce GTX 1070, 591.11 H/s

[2017-09-13 08:42:16] accepted: 7/7 (diff 1.606), 1691.42 H/s yes!

[2017-09-13 08:42:16] accepted: 8/8 (diff 1.290), 1691.42 H/s yes!

[2017-09-13 08:42:17] accepted: 9/9 (diff 1.728), 1691.42 H/s yes!

[2017-09-13 08:42:18] accepted: 10/10 (diff 3.287), 1691.42 H/s yes!

[2017-09-13 08:42:18] accepted: 11/11 (diff 1.178), 1691.42 H/s yes!

[2017-09-13 08:42:19] GPU #0: GeForce GTX 1080, 523.52 H/s

[2017-09-13 08:42:21] GPU #2: GeForce GTX 1070, 589.68 H/s

[2017-09-13 08:42:21] GPU #1: GeForce GTX 1070, 584.84 H/s

[2017-09-13 08:42:21] accepted: 12/12 (diff 2.204), 1693.22 H/s yes!

[2017-09-13 08:42:21] accepted: 13/13 (diff 1.318), 1693.22 H/s yes!

[2017-09-13 08:42:21] accepted: 14/14 (diff 1.354), 1693.22 H/s yes!

[2017-09-13 08:42:22] accepted: 15/15 (diff 2.176), 1693.22 H/s yes!

[2017-09-13 08:42:23] accepted: 16/16 (diff 9.434), 1693.22 H/s yes!

This is a MinerGate script (I know, but it was getting results).

As you can see, my rate is in H/s instead of kH/s. That's really bad…
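To put the numbers from the log into kH/s, here is a minimal Python sketch that pulls the most recent per-GPU rate and the most recent total out of a few of the lines pasted above. The regular expressions are an assumption about the line format, based only on the output shown in this post, and `latest_rates` is a hypothetical helper name, not part of ccminer.

```python
import re

# A few lines copied from the ccminer output in this post.
LOG = """\
[2017-09-13 08:42:19] GPU #0: GeForce GTX 1080, 523.52 H/s
[2017-09-13 08:42:21] GPU #2: GeForce GTX 1070, 589.68 H/s
[2017-09-13 08:42:21] GPU #1: GeForce GTX 1070, 584.84 H/s
[2017-09-13 08:42:23] accepted: 16/16 (diff 9.434), 1693.22 H/s yes!
"""

# Assumed line formats, inferred from the log above.
GPU_LINE = re.compile(r"GPU #(\d+): (.+?), ([\d.]+) H/s")
ACCEPTED_LINE = re.compile(r"accepted: \d+/\d+ \(diff [\d.]+\), ([\d.]+) H/s")


def latest_rates(log):
    """Return ({gpu_index: latest H/s}, latest total H/s or None)."""
    per_gpu = {}
    total = None
    for line in log.splitlines():
        m = GPU_LINE.search(line)
        if m:
            # Later lines overwrite earlier ones, so the last rate wins.
            per_gpu[int(m.group(1))] = float(m.group(3))
            continue
        m = ACCEPTED_LINE.search(line)
        if m:
            total = float(m.group(1))
    return per_gpu, total


per_gpu, total = latest_rates(LOG)
for gpu, rate in sorted(per_gpu.items()):
    print(f"GPU #{gpu}: {rate:.2f} H/s = {rate / 1000:.3f} kH/s")
print(f"ccminer total: {total:.2f} H/s = {total / 1000:.3f} kH/s")
```

With the lines above, each card lands at roughly 0.5 to 0.6 kH/s, and the total reported on the accepted line is about 1.69 kH/s.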